An MVP for a scalable dev tool that writes production-ready apps from scratch as the developer oversees the implementation

In this blog post, I will explain the tech behind GPT Pilot - a dev tool that uses GPT-4 to code an entire, production-ready app.

The main premise is that AI can now write most of the code for an app, even 95%.

That sounds great, right?

Well, an app won’t work unless all the code works completely. So, how do you make that happen? This post is part of my research project to see if AI can really do 95% of developers' coding tasks. I decided to use GPT-4 to make a tool that writes scalable apps with the developer's oversight.

I will show you the main idea behind GPT Pilot, the crucial concepts it's built upon, and its workflow up until the coding part.

Currently, it’s in an early stage and can create only simple web apps. Still, I will cover the entire concept of how it can work at scale and demonstrate how much of the coding tasks AI can do while the developer acts as a tech lead overseeing the entire development process.

Here are some example apps that I created with it:

Ok, let's dive in.

How does GPT Pilot work?

First, you enter a description of an app you want to build. Then, GPT Pilot works with an LLM (currently GPT-4) to clarify the app requirements, and finally, it writes the code. It uses many AI Agents that mimic the workflow of a development agency.

After you describe the app, the Product Owner Agent breaks down the business specifications and asks you questions to clear up any unclear areas.

Then, the Software Architect Agent breaks down the technical requirements and lists the technologies that will be used to build the app.

Then, the DevOps Agent sets up the environment on the machine based on the architecture.


Then, the Tech Team Lead Agent breaks down the app development process into development tasks where each task needs to have:

- Description of the task (this is the main description upon which the Developer agent will later create code)

- Description of automated tests that will need to be written so that GPT Pilot can follow TDD principles

- Description for human verification, which is basically how you, the human developer, can check if the task was successfully implemented

Finally, the Developer and the Code Monkey Agents take tasks one by one and start coding the app. The Developer breaks down each task into smaller steps, which are lower-level technical requirements that might not need to be reviewed by a human or tested with an automated test (eg. install some package).
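To make the shape of a development task more concrete, here is a minimal sketch in Python. The class name `DevelopmentTask`, its field names, and the `is_complete` helper are all hypothetical and chosen only for illustration; this is not GPT Pilot's actual data model. It simply records the three descriptions listed above, plus the smaller steps that the Developer agent adds later.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DevelopmentTask:
    """One task from the Tech Team Lead agent (hypothetical shape, not GPT Pilot's real model)."""
    description: str                 # what the Developer agent will implement
    automated_test_description: str  # what the automated tests should check
    human_verification: str          # how the human developer can confirm it works
    steps: List[str] = field(default_factory=list)  # filled in later by the Developer agent


def is_complete(task: DevelopmentTask) -> bool:
    """A task is usable only when all three descriptions are non-empty."""
    required = (task.description, task.automated_test_description, task.human_verification)
    return all(text.strip() for text in required)


task = DevelopmentTask(
    description="Add a GET /health route that returns a status message",
    automated_test_description="Request /health and assert a 200 response",
    human_verification="Open http://localhost:3000/health in a browser",
)

# The Developer agent then breaks the task into lower-level steps.
task.steps = ["install express", "create routes/health.js", "register the route in app.js"]
```

Keeping the human verification text next to the task description means the developer always knows how to check a task before moving on to the next one.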

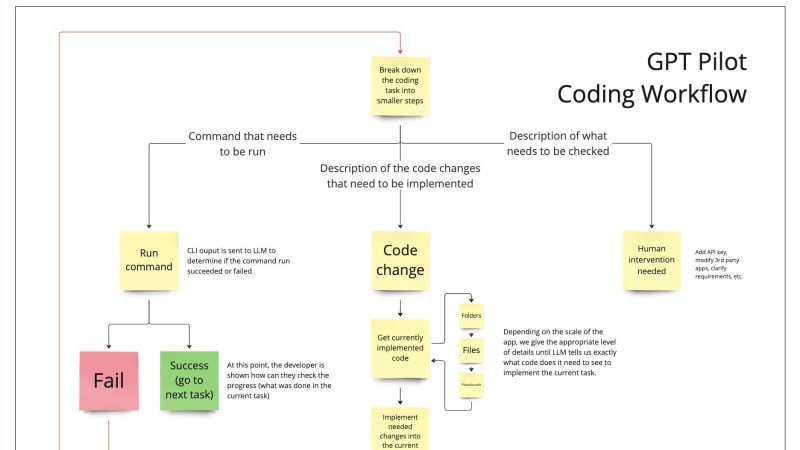

In the next blog post, I will write in more detail about how Developer and Code Monkey work (the diagram above is a sneak peek of the coding workflow), but now, let's see the main pillars upon which GPT Pilot is built.

3 Main Pillars of GPT Pilot

I call these the pillars because, since this is a research project, I wanted to be reminded of them as I work on GPT Pilot. I want to explore what's the most that AI can do to boost developers' productivity, so all improvements I make need to lead to that goal and not create something that writes simple, fully working apps but doesn't work at scale.

Pillar #1. Developer needs to be involved in the process of app creation

As I mentioned above, I think that we are still far away from an LLM that can just be hooked up to a CLI and create any app by itself. Nevertheless, GPT-4 works amazingly well when writing code. I use ChatGPT all the time to speed up my development process - especially when I need to work on some new technology or an API or if I need to create a standalone script. The first time I realized how powerful it can be was a couple of months ago when it took me 2 hours with ChatGPT to create a Redis proxy that would usually take 20 hours to develop from scratch. I wrote a whole post about that here.

So, to enable AI to generate a fully working app, we need to allow it to work closely with the developer who oversees the development process and acts as a tech team lead while AI writes most of the code. So, the developer needs to be able to change the code at any moment, and GPT Pilot needs to continue working with those changes (eg. add an API key or fix an issue if an AI gets stuck).

Here are the areas in which the developer can intervene in the development process:

- After each development task is finished, the developer should review it and make sure it works as expected (this is the point where you would usually commit the latest changes)

- After each failed test or command run - it might be easier for the developer to debug something (eg. if a port on your machine is reserved but the generated app is trying to use it - then you need to hardcode some other port)

- If the AI doesn't have access to an external service - eg. in case you need to get and add an API key to the environment

Pillar #2. The app needs to be coded step by step

Let's say you want to create a simple app, and you know everything you need to code and have the entire architecture in your head. Even then, you won't code it out entirely, then run it for the first time and debug all the issues at once. Instead, you will split the app development into smaller tasks, implement one (like add routes), run it, debug, and then move on to the next task. This way, you can fix issues as they arise.

The same should apply when AI writes the code.

Like a human, it will make mistakes for sure, so for it to have an easier time debugging and for the developer to understand what is happening in the generated code, the AI shouldn't just spit out the entire codebase at once. Instead, the app should be generated and debugged step by step just like a developer would do - eg. setup routes, add database connection, etc.

Other code generators like Smol Developer and GPT Engineer work in a way that you write a prompt about the app you want to build, and they try coding out the entire app and give you the entire codebase at once. While AI is great, it's still far away from coding a fully working app on the first try, so these tools give you a codebase that is really hard to get into and, more importantly, much harder to debug.

I think that if GPT Pilot creates the app step by step, both AI and the developer overseeing it will be able to fix issues more easily, and the entire development process will flow much more smoothly.

Pillar #3. GPT Pilot needs to be scalable

GPT Pilot has to be able to create large production-ready apps and not only small apps where the entire codebase can fit into the LLM context. The problem is that all learning that an LLM has is done in-context. Maybe one day, the LLM could be fine-tuned for each specific project, but right now, it seems like that would be a very slow and redundant process.

The way that GPT Pilot addresses this issue is with context rewinding, recursive conversations, and TDD.

Context rewinding

The idea behind context rewinding is relatively simple - for solving each development task, the context size of the first message to the LLM has to be roughly the same. For example, the context size of the first LLM message while implementing development task #5 has to be more or less the same as the first message while developing task #50. Because of this, the conversation needs to be rewound to the first message upon each task.

For GPT Pilot to solve tasks #5 and #50 in the same way, it has to understand what has been coded so far along with the business context behind all code that's currently written so that it can create the new code only for the task that it's currently solving and not rewrite the entire app.

I'll go deeper into this concept in the next blog post, but essentially, when GPT Pilot creates code, it makes the pseudocode for each code block that it writes, as well as descriptions for each file and folder it needs to create. So, when we need to implement each task, in a separate conversation, we show the LLM the current folder/file structure; it selects only the code that is relevant to the current task, and then, we add only that code to the original conversation that will write the actual implementation of the task.
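The selection step described above can be sketched roughly as follows. This is only an illustration under stated assumptions: `FileSummary`, `select_relevant`, `build_first_message`, and `keyword_pick` are hypothetical names, and `keyword_pick` is a toy keyword matcher standing in for the separate LLM conversation that picks the relevant files. It is not how GPT Pilot actually implements the selection.

```python
from dataclasses import dataclass


@dataclass
class FileSummary:
    path: str
    description: str  # short description stored alongside the file
    code: str


def select_relevant(files, task, pick):
    """Show only paths and descriptions to `pick`, then keep the files it chooses."""
    listing = [(f.path, f.description) for f in files]
    chosen = set(pick(listing, task))
    return [f for f in files if f.path in chosen]


def build_first_message(task, files, pick):
    """Start each task from a fresh conversation that contains only the relevant code."""
    relevant = select_relevant(files, task, pick)
    parts = [f"Task: {task}"]
    parts += [f"--- {f.path} ---\n{f.code}" for f in relevant]
    return "\n\n".join(parts)


def keyword_pick(listing, task):
    """Toy stand-in for the LLM: keep files whose description shares a word with the task."""
    words = set(task.lower().split())
    return [path for path, desc in listing if words & set(desc.lower().split())]


files = [
    FileSummary("routes/users.js", "user routes", "router.get('/users', listUsers)"),
    FileSummary("db/connect.js", "database connection", "mongoose.connect(process.env.DB_URL)"),
    FileSummary("views/home.ejs", "home page template", "<h1>Home</h1>"),
]

message = build_first_message("add delete user endpoint", files, keyword_pick)
```

Because the first message is rebuilt from the file listing for every task, its size depends on how much code is relevant to that task rather than on how many tasks came before it.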

Recursive conversations

Recursive conversations are conversations with the LLM that are set up in a way that they can be used "recursively". For example, if GPT Pilot detects an error, it needs to debug it, but let's say that another error happens during the debugging process. Then, GPT Pilot needs to stop debugging the first issue, fix the second one, and then get back to fixing the first issue. This is a crucial concept that, I believe, needs to work to make AI build large and scalable apps. It works by rewinding the context and explaining each error in the recursion separately. Once the deepest level error is fixed, we move up in the recursion and continue fixing errors until the entire recursion is completed.
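A minimal sketch of this recursion, assuming a hypothetical `attempt_fix` callback that tries to fix one error and returns any new errors that block it (an empty list means the fix succeeded). In a real setup each call would be its own rewound LLM conversation; here it is a toy function so that the control flow is easy to follow. The function names, the depth limit, and the retry limit are all assumptions made for this example.

```python
def fix_error(error, attempt_fix, depth=0, log=None, max_depth=5, max_retries=3):
    """Fix `error`; if new errors block the fix, resolve those first, then retry."""
    log = [] if log is None else log
    if depth > max_depth:
        raise RuntimeError(f"gave up on {error!r}: recursion too deep")
    log.append(f"{'  ' * depth}start {error}")
    for _ in range(max_retries):
        blockers = attempt_fix(error)  # empty list means the fix worked
        if not blockers:
            log.append(f"{'  ' * depth}fixed {error}")
            return log
        for blocker in blockers:
            # Pause the current error and go one level deeper.
            fix_error(blocker, attempt_fix, depth + 1, log, max_depth, max_retries)
    raise RuntimeError(f"gave up on {error!r}: too many retries")


def make_toy_fixer():
    """Toy stand-in for the LLM: one error cannot be fixed until another is resolved."""
    resolved = set()
    blocked_by = {"server crashes on start": ["DB_URL is not set"]}

    def attempt_fix(error):
        pending = [b for b in blocked_by.get(error, []) if b not in resolved]
        if not pending:
            resolved.add(error)
        return pending

    return attempt_fix


log = fix_error("server crashes on start", make_toy_fixer())
# log now records the nested order in which the errors were started and fixed.
```

The depth and retry limits are there so that the process stops and hands control back to the developer instead of looping forever.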

TDD (Test Driven Development)

For GPT Pilot to scale the codebase, improve it, change requirements, and add new features, it needs to be able to create new code without breaking previously written code. There is no better way to do this than working with TDD methodology. For all code that GPT Pilot writes, it needs to write tests that check if the code works as intended so that whenever new changes are made, all regression tests can be run to check if anything breaks.
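The regression check can be pictured as a simple gate: a change is kept only if every existing test still passes. The sketch below is a toy illustration, not GPT Pilot's implementation. The codebase is modeled as a dictionary of functions, each test is a function that returns `True` or `False`, and `apply_change` is a hypothetical helper name.

```python
def apply_change(codebase, change, tests):
    """Apply `change` to a copy of the codebase and keep it only if every test passes."""
    candidate = {**codebase, **change}
    failures = [name for name, test in tests.items() if not test(candidate)]
    if failures:
        return codebase, failures  # reject the change and report what broke
    return candidate, []


codebase = {"add": lambda a, b: a + b}
tests = {"add returns the sum": lambda c: c["add"](2, 3) == 5}

# A new feature arrives together with its own test.
tests["sub returns the difference"] = lambda c: c["sub"](5, 3) == 2
codebase, failures = apply_change(codebase, {"sub": lambda a, b: a - b}, tests)

# A later change that silently breaks `add` is caught by the older test.
kept, regressions = apply_change(codebase, {"add": lambda a, b: a * b}, tests)
```

In the second call the change is rejected, so `kept` is still the previous working codebase and `regressions` names the test that failed.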

I'll go deeper into these three concepts in the next blog post, in which I'll break down the entire development process of GPT Pilot.

Next up

In this first blog post, I discussed the high-level overview of how GPT Pilot works. In posts 2 and 3, I show you:

- How the Developer and Code Monkey agents work together to implement code (write new files or update existing ones).

- How recursive conversations and context rewinding work in practice.

- How we can rewind the app development process and restore it from any development step.

- How all agents are structured - as you might've noticed, there are several GPT Pilot agents, and some might be redundant right now, but I think these agents will evolve over time, so I wanted to build agents in a modular way, just like regular code.

But while you are waiting, head over to GitHub, clone the GPT Pilot repository, and experiment with it. Let me know if you are successful, and while you’re there, don’t forget to star the repo - it would mean a lot to me.

Thank you for reading 🙏, and I’ll see you in the next post!

If you have any feedback or ideas, please let me know in the comments or email me at zvonimir@pythagora.ai, and if you want to get notified when the next blog post is out, you can add your email here.

Top comments (39)

Impressive stuff. However...

"Writing code" with GPT or other similar is like going to the gym and having a robot do all the exercises. What do you gain? Very little. It baffles me why any dev would want to work this way? Do you not enjoy the mental stimulation of coding and solving things yourself? Isn't that why u got into this field?

Don't get me wrong, I see value in AI based tools to assist you (autocompleting based on your previous code & project codebase etc.) - I've been using TabNine almost since it existed.

I also see the attraction in building this kind of tool - I get it, it's interesting tech and you're making it do interesting things. I enjoy playing with new toys too.

Using it to carry out 95% of the tasks that I really enjoy doing though? No thanks. That would be job satisfaction and enjoyment straight down the drain.

Hm, yea, I see your point. I definitely enjoy working on this. In terms of why would anyone use this - imagine, you can build a full blown app in 1 week. Imagine, you can ship a new feature every day.

This kind of workflow might be worrying since none of us is used to it but software development will change drastically in the upcoming years. Whether it will be something like GPT Pilot or something else, we'll see, but we definitely won't work like we do today. I think of it like people used to have control over the memory allocation. Today, you almost never think about that. There are memory leaks from time to time but that's so rare that you don't worry about memory anymore.

Unfortunately, it's not rare at all - and the fact that a mentality has developed whereby almost no-one cares about resource usage has gotten software quality and development to the dire place that it is in now. Developers still have control over how they use resources - most are just blissfully unaware of anything in this area, and are oblivious to the fact there are even problems (or that their own actions may be creating said problems)

This kind of generative AI driven workflow is worrying because overall it will only accelerate the decline in quality of skills in upcoming developers, and will essentially turn a lot of us into code reviewers for the generated code (which is often of dubious quality). There will also be a shrinking pool of people who can actually sensibly review this code, due to the increasing shortage of competency created by over-reliance on these technologies.

Overall innovation and original work will also be stifled, as all the good quality, interesting work will be buried under an avalanche of mediocre 'content' (in this case, apps that are 'functional' but likely poorly understood by the people that built them). This phenomenon is visible everywhere (DEV.to being a prime example... there used to be mainly good quality content on here) - and is ruining things in so many areas at an alarming rate.

We're told this is all fine though, because the 'business' guys love this stuff as it is made quickly and cheaply.

I wish I knew how to stop the rot, but it seems like a futile war against $$$

I agree with you in a decline of developers who can create high quality code. I'm not sure how will that play out since GPT Pilot does need a developer to be present. I think that kind of a change is on a long term horizon - 10, 20 years and who knows what will happen by then. Maybe we get to AGI and the whole world turns upside down.

But you do make good points. I guess we'll see what the future holds.

So... machines are things that make work easier. And gyms are places humans go to voluntarily do unproductive work to restructure our tissue as biological organisms to a better condition for more work later. ...Weird analogy you're crafting there.

Why use AI to write software? Because we have many problems that require a lot of inexpensive labor thrown at them to solve. What about that means the work is not a constructive kind because the big metal plates just fall back where they were when you stop applying kinetic energy and relocate them? If we were building a water tower I wouldn't instruct an AI helping such that "and then once you get the water up there just slowly return it to where it originally was and we'll move on to radio towers to end the day so we're fresh for 'suspension bridge day' tomorrow"...?

Apps written through generative AI are not now and never were an intended solution for some overblown "dumbbell average altitude above sea level" crisis, any more than weight lifting was ever a proposed solution to this "help wanted: lifting metric tons of plastic" sign we put up right before the Pacific Ocean ruining the view. But if nobody's going to pick up the garbage for us I guess I can resist the urge to look down on a robot laborer written for this purpose that gets written on my behalf for free? And I'm for sure not ragging on him when he's done for his totally unswolled gains after all that time trying to bulk with like no protein formula in his routine.

This is an amazing idea, thanks for sharing! I've seen quite a few coding agents, but I think this is the correct approach - LLM collaborating with the developer and asking for help when it gets "stuck". Congrats on the launch!

How long did it take to build it, what was the biggest challenge?

Thanks @matijasos! Yea, I also think that this approach should yield a big productivity boost for devs. It took us 3 weeks for this mvp.

The biggest challenge is just tuning (not fine-tuning) the prompts and pointing GPT-4 in the right direction. I think that this is the biggest piece of work that needs to be done going forward - figuring out which prompts work best.

I guess the prompts one feeds to the LLMs will always be the greatest challenge. We struggle as humans to communicate explicitly with each other, so trying to tell an LLM exactly what we want will be as big a challenge, if not bigger, especially if we start pushing the boundaries a bit. It does make for very interesting and exciting times though. And the sooner we can learn how to apply this tech responsibly and efficiently, the sooner we as a human species would be able to stop looking and fighting "inward" and start turning around as a collective to go out and explore outward.

Watching The Next Decade of Software Development - Richard Campbell - NDC London 2023, I followed with great interest as he demonstrated where we started off some 40-50 years ago, and pointed out that we are at the end of "Silicon-Street". Soon we won't have atoms left to make smaller transistors with.

And while quantum computing is still in its very infancy today (comparing it to the first computer memory and processing power capabilities), I am positive that "tomorrow" we will soon be set on a similar path as far as growth of knowledge and technology is concerned when quantum computing truly kicks in.

There's a whole galaxy out there that can be discovered and explored over the next few centuries, and then next it will be our known universe. It's time we stop our little "pre-school tantrums", and look at "primary school". There's so much to learn and gain, vs losing a stupid classroom fight. Imagine where we can be once we reach some modicum of "adulthood" in our approach to being human?

To take 3D printing next-level from creating all kinds of stuff from raw materials, imagine what we can do when we can start printing with base element atoms? What about Warp engines? The micro experiments scientists have been working on just last year make the dream of going to WARP 9.5 far more attainable if we harness our energies and focus them on teaching our creations to do some hard thinking at "double quantum-time"! LOL

Impressive stuff. I know a lot of people won't agree with me, but I think this is where development is inevitably going. The time put into churning out code is huge, and businesses are not going to do that if there's a faster, safer alternative that produces the same or better results. We've seen this thousands of times over.

I think as engineers we have a duty to embrace this innovation. There will always be room for humans to guide and fix the machine. It might not be with the interface or tools we have now, but our roles as tech stewards will not vanish unless we refuse to grow.

Kudos for this initiative! I've starred it and look forward to testing it out :)

Thank you so much man for the encouraging words 🙏 🙏

Yea, TBH, I do think that as well. Whether it will be GPT Pilot or something else, we'll see, but I think that a dev's workflow will completely change in the upcoming years.

As you said, we should be embracing innovation and LLMs as a tech opens up so much opportunity to innovate. And I don't think that anyone will lose their job if they adapt to the new tech that will come out.

Looks promising, great work! It's just 1h ago I've written that AI tools are good at small/simple stuff and here we go :)

Would be curious to see how this project evolves. What kind of tooling/IDE integrations can there be, how can it fit into the existing workflows of teams that maintain codebases, etc.?

Yea, great question! We've actually created a VS Code extension which should launch soon - maybe even next week. The way that I see this being used is definitely within the IDE (likely an extension) so that it can assist you when you need to intervene and change some code (which the developer will definitely need to do from time to time).

Looking forward to trying the VS Code extension! Btw, in May I published one of my own, also an AI coding assistant: marketplace.visualstudio.com/items...

Oh, awesome, will check it out. Congrats on shipping and 4k downloads!!

Hi, Zvone. Great and very interesting work!

Your work looks extremely promising as you go through explaining how you and your team applied GPT to assist in developing scalable software. May it do well and provoke more insight for you all as well as future users and contributors.

I’m excited to see that TDD is part of the implementation. What I would like to know (I know you are working on designing your own Test platform “Pythagoras” for Node.JS apps) is why not consider having a more generic and decoupled TDD approach? Decoupling all your tests; E2E, Application tests, and Unit tests from any specific Testing frameworks, libraries, etc.? This will create a wider adoption and flexibility for developers wanting to use GPT Pilot via any Testing Frameworks and libraries they prefer.

Another very valuable addition to consider would be implementing a DDD approach forming part of your “User-Story” phase. Significant work has been done in that field too, and I’m sure you folks could benefit from it.

Working a solid DDD approach into GPT Pilot would take this project to a tier not yet publicly available, at least to my knowledge. The thought patterns and tech are already available to enable you to do so, though. Refer to: Clair Mary Sebastian’s article on Medium (Enhancing Domain-Driven Design with Generative AI — 2023) and George Lawton’s on TechTarget (How deep learning and AI techniques accelerate domain-driven design — 2017.) There are additional articles with truly inspiring concepts on related topics on Inspiring Brilliance and TechTarget’s sites as well.

If you can include sound and “flexible” foundations for both DDD and decoupled TDD SW dev approaches, this will push endeavours like yours forward to build truly great systems guided by human developers (both business devs and software devs), capturing two key points in the industry: Design software based on Business models that work, and test the software fully in many, if not all aspects before deploying a single line. That would be a winner!

May your project go very well!

Thank you @andre_adpc 🙏 Yes, I think TDD will be a big part of GPT Pilot - we just need to catch time to implement it correctly, and more importantly, to research what's the best way LLMs work with TDD. I actually started with TDD but realized that it's not trivial to get LLM to be more efficient with it.

I didn't know about DDD, looks interesting. I think that to make GPT Pilot work really well, we'll need to test many of these concepts to see which one works best with LLMs. Same as with TDD.

Very interesting read! It looks like we're on a very similar path. I would love to hear your thoughts on Codebuddy here: codebuddy.ca

I seem to have come to the same conclusion you have about the need for human oversight at every step. This is a working prototype that tries to do what you've done here, also with an array of agents but at a slightly lower level (though much higher than GitHub Copilot).

JetBrains plug-in will be ready soon too! The under served!

Oh nice!! Congrats on the project. I tried to sign up but it seems to request a lot of permissions from my Github so didn't feel comfortable giving away so much. Did you think to ease that up for users so they can try it out more easily?

I assume you need all those permissions for some features, but it might be good to request just the basic perms so people can see what is Code Buddy about (like I wanted to see) and then, you request more permissions once they want to try those advanced features.

Btw, why not open source the project?

Thanks!

As a matter of fact, I went to work implementing this as a result of your comment and I think it's ready! Now you can login using "limited access" and specify a fine-grained token with whatever permissions you want.

We also only really need those permissions for the website and the JetBrains plugin is nearly ready for release so... even more reason to have a reduced permissions option!

Can this be used in an existing projects? Otherwise adoption is going to be difficult. (It's not every day that you start a new project)

Good question - not at the moment. Currently, this is a research project to see how many coding tasks can be done by AI. Once GPT Pilot works completely as described in this post, the idea is to create a tool that maps out an existing project by working with a developer (eg. in 1-2 days). Once a project is mapped, GPT Pilot can continue developing on it.

That's very interesting. Looking forward to this. The idea of GPT writing PRs for me to review was intriguing from the very beginning.

Good job. Keep it flying.

Thanks 🙏 🙏

Interesting post

Thanks @leowhyx 🙏

This is captivating! The potential of GPT-4 for developers is vast. Eager to see your demo. Keep pushing boundaries! 🌟

Thanks

Thank you for sharing! It would be awesome if it could explain the code step by step; as a student and also a programmer, it would be a must for learning and staying up to date ❤️

If someone crafts an HTML/CSS design automation tool, they're set for success!

I've bookmarked your repo for later.

Thanks 🙏

Are we not going to talk about how we need to solve the causality problem to make these kinds of tools useful? A next token generator is a useful thing indeed, and will be necessary for an AI to actually write software, but without “understanding” causality these tools will continue to produce mediocre, at best, results.

Most projects are mediocre at the start. I don’t think the purpose (for the millionth time) is to replace the developers or the full software delivery process with generative AI agents. The point is to spend less cognitive effort rebuilding the same basic foundation every single time.

I’d rather spend time on the actual hard problems of the project.

Looks good. I skimmed your blog but didn't use. Monitoring tokens before and after would be nice. I think you're aware the API gives token counts.
